
The Double Mental Load of Artificial Intelligence: Thinking on Behalf of the Brainless Tool

Artificial intelligence has boosted productivity for some — most notably by dramatically streamlining code writing. Yet the tool now seems to be generating its own bottlenecks: both at the individual level and across entire organizations. This is what I call the double mental load of AI. Could the productivity gains we were promised end up canceling themselves out?

Naomi Roth

March 9, 2026 · 8 min read

An Unstable Recipe

Write faster, think faster... offload low-value tasks so we can focus on more complex problems: produce more, but above all, produce better — that's the promise. Many people have attested to real gains, at least initially.

Developers are churning out code at breakneck speed. AI-assisted coding tools have even fueled the explosion of vibe coding — the phenomenon of complete beginners managing to build functional software through specialized interfaces, despite having no formal training. Business leaders tell me their legal files have doubled in volume since generative AI models arrived on the scene. Those same leaders, along with their teams, often use AI to draft or review contracts (possibly a mistake — but that's a separate conversation).

Communications professionals are cranking out LinkedIn posts and newsletters like they're breathing. And the romance novelist has put down their pen entirely, reinventing themselves as the supervisor of an army of AI agents that allows them to publish 200 books a year instead of 10 (yes, that was already a lot).

In short, the watchword seems to be acceleration.

This rapid, widespread adoption (still unmastered, it bears repeating) has given rise in certain fields to a new task: reviewing AI-generated content. In some professions, this review process is even gradually displacing original, purely human intellectual production. Where people once created, they now proofread. It's a shift with real consequences, chief among them the emergence of what I'd call a double mental load: a phenomenon that calls into question, if not outright undermines, some of the gains that were initially celebrated.

Ingredient One: We've Become AI Moderators

Explaining this paradoxical productivity loss requires first understanding that work changes fundamentally the moment AI becomes one of a worker's primary tools. Once it's widely used in their immediate professional environment, by colleagues or clients, the very nature of the information they handle day-to-day shifts.

It has probably not escaped your notice that slop was named Merriam-Webster's Word of the Year for 2025. The term refers to meaningless, AI-generated content, and draws a fairly obvious comparison to junk food: slop is to information what fast food is to cooking.

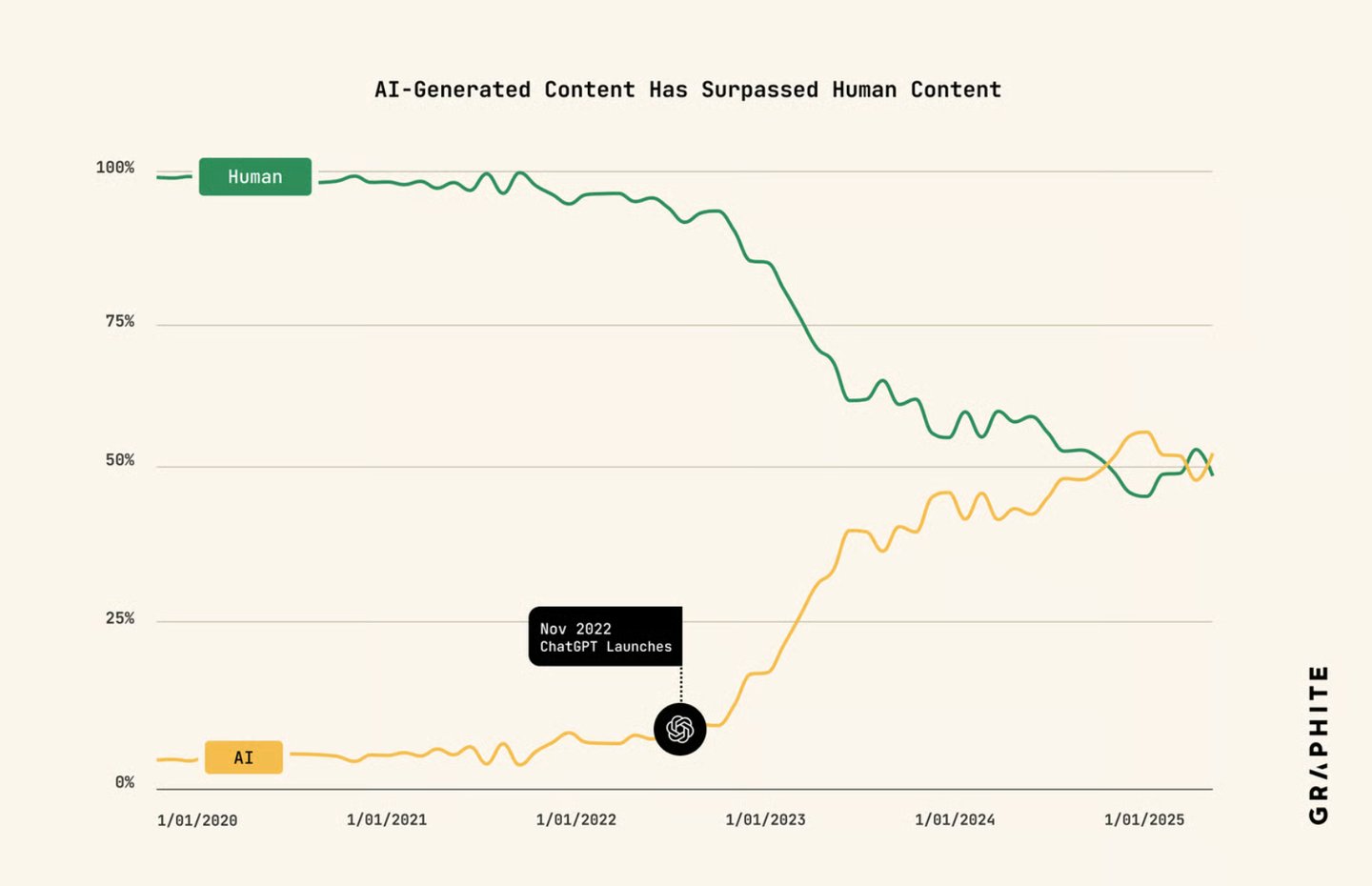

An estimated 51% of articles published online today are artificially generated. (Source: Graphite, "More Articles Are Now Created by AI Than Humans," 2025)

And while slop initially described a phenomenon spotted on social media, it has since expanded its natural habitat — including inside organizations, where it spreads like an invasive species: quickly, at the expense of whatever ecosystem was already in place. It has even given rise to a subspecies known as workslop.

The invasion is all the more insidious because AI-generated output looks polished at first glance. According to a 2025 survey cited in a Harvard Business Review article, 66% of users shared AI-generated content without first reading it, and 40% of American workers reported receiving workslop from their colleagues.

It's an experience that is equal parts baffling and frustrating, because it forces the recipient to redo all or part of the work — effectively thinking on behalf of the sender and the tool that was supposed to help them in the first place.

With workslop on the rise, entire dead-end chains can form where everyone's time is wasted and almost no value is produced: one person generating hollow content, another correcting it at a loss. There's even a measurable cost: on average, each workslop incident takes recipients nearly two hours to deal with, which, by the estimate reported in the same Harvard Business Review article, amounts to more than $9 million in lost productivity per year for an organization of 10,000 employees.

Because the tool moves faster than humans, the temptation grows to generate first and fix later. But that opens the door to proofreading errors, oversights, and negligence that ultimately prove costly.

Which brings us to a second issue: workslop or not, the wave of content that AI produces quickly and effortlessly places a new, silent burden on the teams now responsible for validating it. Becoming a moderator of the machine's output means dealing with a tool that can generate content faster than any human can review it. Artificially generated content can pile up in the review queue well beyond a team's capacity to process it.

This is how organizational bottlenecks form: on one end, a link in the chain sees its output accelerate; on the other, pressure builds, and teams struggle to absorb, digest, or sign off on the flow. The time savings generated upstream are canceled out, partially or entirely. It's gridlock.
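For readers who think in code, the bottleneck can be sketched as a simple queue. This is a toy model, not a measurement: the function name `simulate_review_queue` and every number below are hypothetical, chosen only to show what happens when output speeds up and review capacity stays flat.

```python
def simulate_review_queue(days, produced_per_day, reviewed_per_day):
    """Return the review backlog at the end of each day (toy model)."""
    backlog = 0
    history = []
    for _ in range(days):
        backlog += produced_per_day                # new items land in the queue
        backlog -= min(backlog, reviewed_per_day)  # reviewers clear what they can
        history.append(backlog)
    return history


# Hypothetical numbers: before AI, output matches review capacity.
before = simulate_review_queue(days=5, produced_per_day=10, reviewed_per_day=10)

# Hypothetical numbers: AI triples output, review capacity is unchanged.
after = simulate_review_queue(days=5, produced_per_day=30, reviewed_per_day=10)

print(before)  # [0, 0, 0, 0, 0]
print(after)   # [20, 40, 60, 80, 100]
```

In this sketch, the amount of work actually signed off each day never exceeds `reviewed_per_day`, whatever the upstream rate. The extra output simply accumulates as backlog.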

Ingredient Two: Reviewing AI-Generated Content Is More Exhausting Than Reviewing Human-Written Content

Your first instinct might be that reviewing someone else's work is less tiring than producing your own. After all, it's just a matter of signing off on something the AI has already chewed up and digested. And yet, the opposite is true — even in cases where the AI hasn't produced workslop, but something that genuinely looks good.

In his excellent piece Too Fast To Think, senior developer Stephan Schmidt captures this emerging fatigue in his own words. The problem, he argues, comes down to the rapid-fire succession of reviews and validations.

Even though his productivity has technically gone up, he hits a cognitive wall earlier in the day — one that stops him cold. "I need to stop for a moment before I can continue," he writes.

To evaluate the AI's work, the professional is not actually spared the effort of thinking. They have to retrace the machine's steps, evaluate each suggestion, and make sure it actually holds up in the real world before signing off. A different kind of thinking — or an additional layer of thinking: humans still have to think for the machine today.

Steve Yegge, formerly of Google and Amazon, among others, says that since AI-assisted coding arrived, he's been hit by inexplicable nap attacks. He describes the same additional load when reading machine-generated work, sometimes collapsing with fatigue after just an hour. The phrasing might make you smile, but it adds to a growing body of accounts that call the productivity picture into question.

This fatigue is something I've heard about repeatedly over the past two years, across very different professions — including some you'd never expect to be caught up in software development concerns, like lawyers. Several of them described the same experience: a mental load that, far from lightening, only grows.

Picture an AI-drafted contract: a time-saver, right? And yet, even when the structure looks sound at first glance, the devil is in the details — a clause that contradicts another, a legal logic that doesn't hold up... or any number of unpleasant surprises quietly simmering beneath the surface, if the human professional doesn't go through it with a fine-tooth comb.

The underlying problem remains the same: the machine cannot think for the human, and the human cannot process text as fast as the machine. Two forces in tension, producing an individual bottleneck — the fatigue of thinking for the AI, of thinking twice. The AI's output appears polished and complete, yet picking up the thread requires a cognitive effort that sometimes exceeds what writing the work from scratch would have taken.

This not only casts doubt on the productivity gains being advertised; it also raises a question: how useful is the machine, really, if it creates mental and organizational gridlock?

The Result: A Double Mental Load

Let's be clear: AI is not a tool that gives everyone wings, all the time, across every type of output. That's a misconception worth challenging, and the double mental load is a concrete illustration of why.

On one hand, when AI-generated content must be reviewed by humans and turns out to be poor quality, it doesn't reduce the mental load — it simply shifts and amplifies it. On the other hand, even when the output is genuinely good, the knowledge worker still finds themselves thinking for the machine. Fighting workslop and AI fatigue daily means being forced to think on behalf of a tool with no brain, while, from the outside, the perceived value-add of the human keeps diminishing.

Productivity isn't measured at the end of a single task. It's measured at the end of the chain. What if these gains prove to be marginal, but lead to higher staff turnover, or to a cognitive debt that eventually becomes unmanageable? Because the bottlenecks are already here, sometimes invisible: more people submitting code, articles, or legal files than there are people available and willing to review them seriously.

AI will never bear the consequences of its own output. Humans will. And so it falls on the human — silently, in advance — to carry a responsibility that neither the machine nor its makers can yet share, one that adds itself, layer by layer, to their mental load.

And what do they get in return?

The temptation grows to lower the guard and trust AI output by default. To stop questioning its blind spots. To stop reading. To stop validating. To finally ease the mental load by leaning on the machine — regardless of its actual performance. This is the well-documented automation bias.

There's a saying in Silicon Valley: "Solve the problem of intelligence, and you solve every problem." That problem is, in fact, not yet solved. Intelligence remains of human origin — and that is precisely why it deserves to be protected, not squandered correcting slop, or eroded by falling out of practice.

The answers are still open questions: better information hygiene, cultivating critical thinking toward technical systems, training programs, clear usage guidelines, human-in-the-loop design with guardrails to counter automation bias, process redesign, exploring autonomation and human-centered approaches, the four-day workweek, profit-sharing tied to AI-generated value... each one a bet on the future.

Stephan Schmidt, in Too Fast To Think: "With traditional coding, the speed of your output matches the complexity of the task and the speed of your coding. This gives your brain time to process what is happening. With vibe coding the coding happens so fast, that my brain can’t process what is happening in real time, and thoughts are getting clogged up. Complex tasks are compressed into seconds or minutes. My brain does not get the baking time to mentally process architecture, decisions and edge cases the AI creates - not enough time to put the AI output into one coherent picture. I’m running a marathon at the pace of a sprint - speeds don’t match. (…) You get fatigue - instead of the usually happiness and satisfaction of coding."